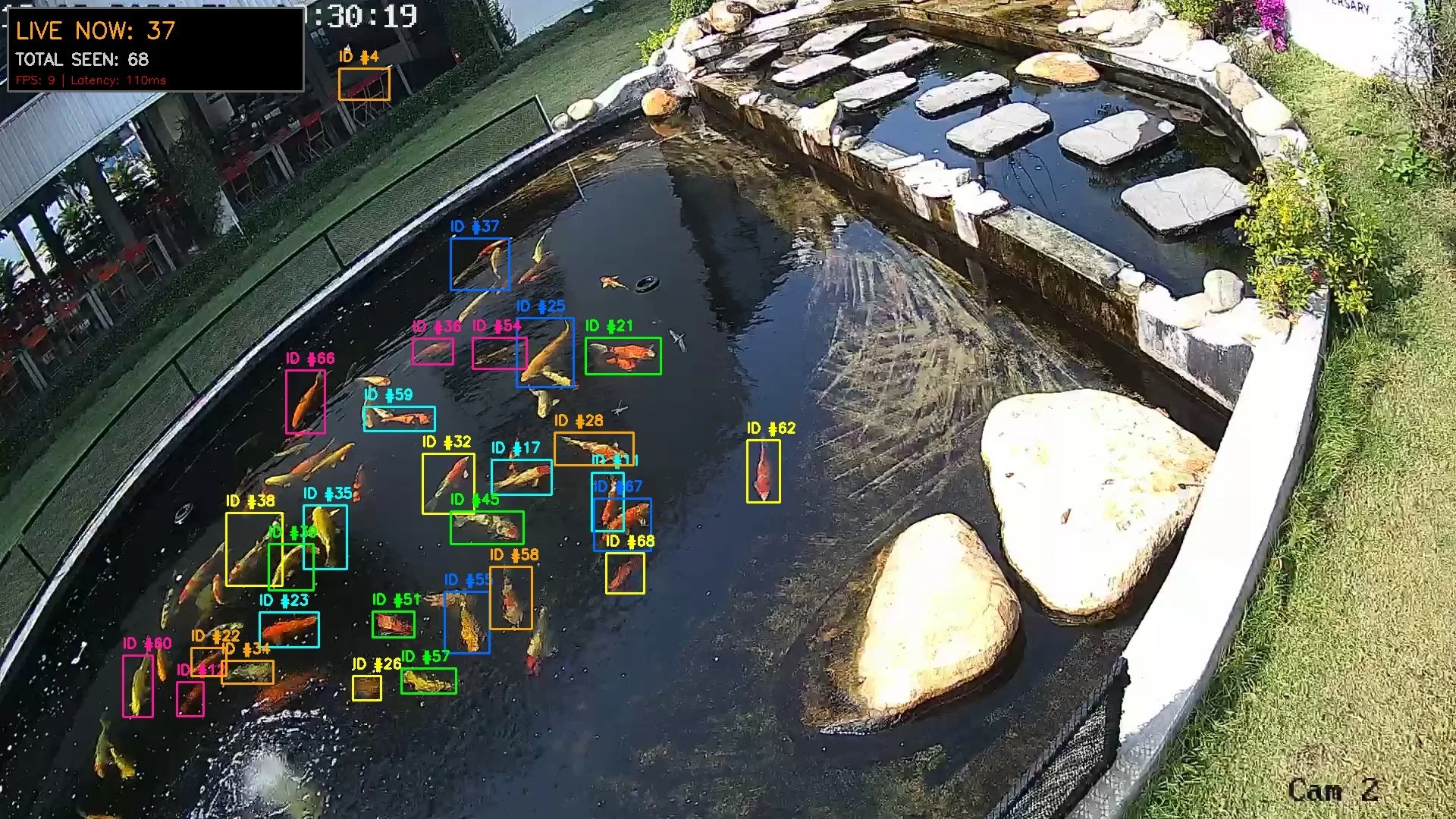

Developed a computer vision model utilizing neural networks to autonomously track and count Koi fish in a dynamic pond environment.

The project was initiated to modernize existing monitoring processes by transitioning from manual observation to a real-time, automated tracking solution.

hardware setup

To support the real-time AI systems, this project required designing and deploying a robust, on-site hardware infrastructure. The core setup consisted of:

Cisco 350 Series Switch: Configured to provide reliable network connectivity and Power over Ethernet (PoE) to streamline the camera array setup.

Hikvision IP Cameras: Strategically deployed across the site to capture continuous, high-definition video feeds, enabling the accurate counting and tracking of Koi fish within the pond.

High-End NVIDIA Workstation: Served as the central compute node, running the compute-intensive AI models for real-time object detection and multi-object tracking with minimal latency.

software setup

To bring this computer vision pipeline to life, I established a software stack and a simulated infrastructure environment, so that the AI models could be trained, optimized, and calibrated for real-world deployment before any physical installation took place. The core software architecture included:

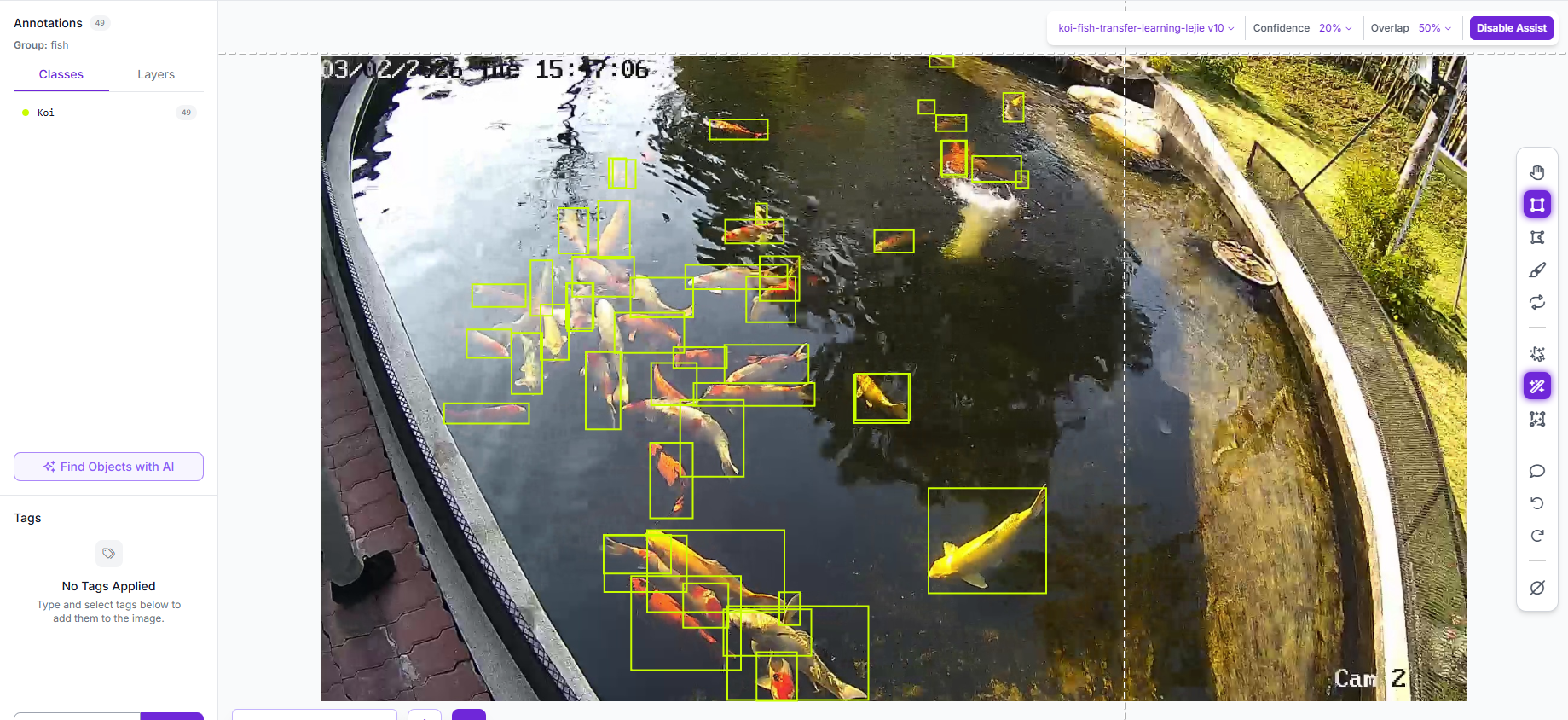

Roboflow (Dataset Management & Annotation): This platform served as the backbone for our data curation pipeline. I utilized its advanced annotation tools to process and label complex aquatic imagery. To drastically accelerate the workflow, I leveraged model-assisted labeling—using pre-trained weights to automate initial bounding boxes before fine-tuning them for our custom Koi tracking model.
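As a rough illustration of the model-assisted labeling step, the sketch below turns detections from a pre-trained model into YOLO-style label lines that can then be reviewed and corrected by hand. The label format (class, normalized center x/y, width, height), the detection dictionary layout, the function names, and the 0.5 confidence threshold are all assumptions for illustration; the write-up does not say which export format or threshold was actually used.

```python
def to_yolo_label(class_id, box_xyxy, image_width, image_height):
    """Convert a pixel-space (x_min, y_min, x_max, y_max) box to a YOLO-style label line."""
    x_min, y_min, x_max, y_max = box_xyxy
    # Clamp to the image so slightly out-of-frame predictions stay valid.
    x_min = min(max(x_min, 0), image_width)
    x_max = min(max(x_max, 0), image_width)
    y_min = min(max(y_min, 0), image_height)
    y_max = min(max(y_max, 0), image_height)
    # Normalize center and size to the 0..1 range.
    cx = (x_min + x_max) / 2 / image_width
    cy = (y_min + y_max) / 2 / image_height
    w = (x_max - x_min) / image_width
    h = (y_max - y_min) / image_height
    return f"{class_id} {cx:.6f} {cy:.6f} {w:.6f} {h:.6f}"


def prelabel(detections, image_width, image_height, min_confidence=0.5):
    """Keep confident detections only; low-confidence boxes are left for manual labeling."""
    return [
        to_yolo_label(d["class_id"], d["box"], image_width, image_height)
        for d in detections
        if d["confidence"] >= min_confidence
    ]


# Hypothetical detections for a 1000x800 frame.
example = [
    {"class_id": 0, "confidence": 0.9, "box": (100, 200, 300, 400)},
    {"class_id": 0, "confidence": 0.2, "box": (0, 0, 10, 10)},
]
print("\n".join(prelabel(example, 1000, 800)))
```

Dropping low-confidence boxes keeps the review step focused on correcting plausible proposals rather than deleting noise.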

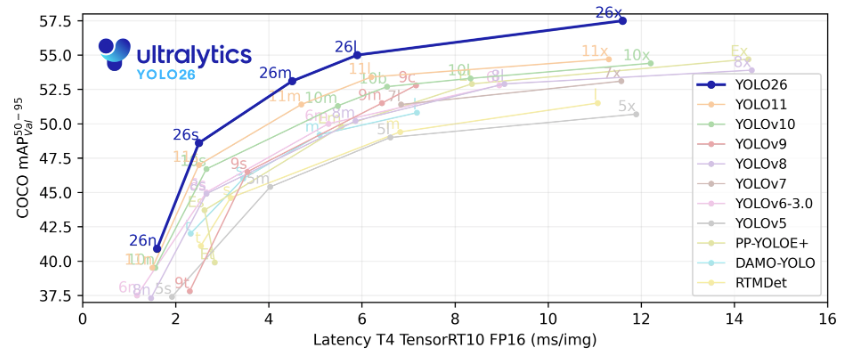

YOLOv26 (Real-Time Object Detection Engine): I selected YOLOv26 as our primary computer vision architecture because of its state-of-the-art balance of high detection accuracy and ultra-low inference times. Its optimized model capacity enabled the system to seamlessly process high-resolution video feeds and accurately identify fast-moving, overlapping fish in real time without dropping frames.

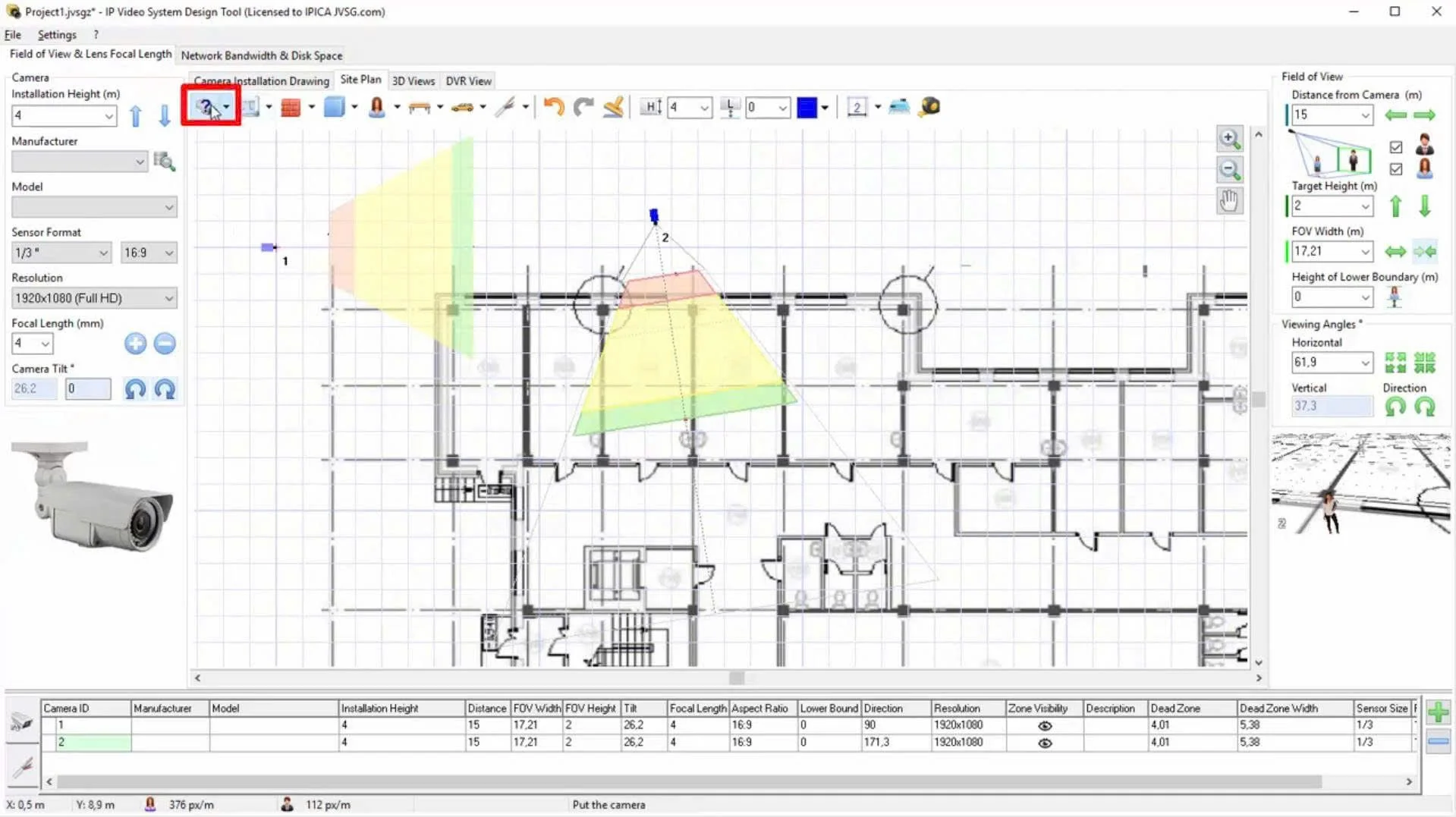

IP Video System Design Tool (Infrastructure Simulation): Before mounting a single physical camera on-site, I used this software to build a digital twin of the pond environment. This allowed me to simulate various hardware placements, test different camera models, and calculate the Pixels Per Meter (PPM) at different distances. This pre-planning was intended to ensure that the camera network would capture the visual fidelity required for the AI to perform reliably.
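The PPM figure can also be approximated by hand from the standard pinhole-camera geometry: the scene width at distance d is 2·d·tan(HFOV/2), and PPM is the horizontal pixel count divided by that width. The sketch below is only an approximation under that assumption (it ignores lens distortion and camera tilt), and the resolution, field of view, and distances in the example are hypothetical values, not the ones used on site.

```python
import math


def pixels_per_meter(horizontal_pixels, hfov_degrees, distance_m):
    """Approximate horizontal pixel density (PPM) at a given distance from the camera."""
    if not 0 < hfov_degrees < 180:
        raise ValueError("hfov_degrees must be between 0 and 180")
    if distance_m <= 0:
        raise ValueError("distance_m must be positive")
    # Width of the scene covered by the sensor at this distance.
    scene_width_m = 2.0 * distance_m * math.tan(math.radians(hfov_degrees) / 2.0)
    return horizontal_pixels / scene_width_m


def max_distance_for_ppm(horizontal_pixels, hfov_degrees, required_ppm):
    """Farthest distance at which the camera still delivers the required PPM."""
    if required_ppm <= 0:
        raise ValueError("required_ppm must be positive")
    return horizontal_pixels / (
        2.0 * required_ppm * math.tan(math.radians(hfov_degrees) / 2.0)
    )


# Hypothetical camera: 2560 px wide image, 90 degree horizontal field of view.
for distance in (2, 4, 8):
    print(distance, "m:", round(pixels_per_meter(2560, 90, distance)), "PPM")
```

Because PPM falls off in inverse proportion to distance, doubling the camera-to-water distance halves the pixel density available to the detector.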

FINAL RESULTS AND

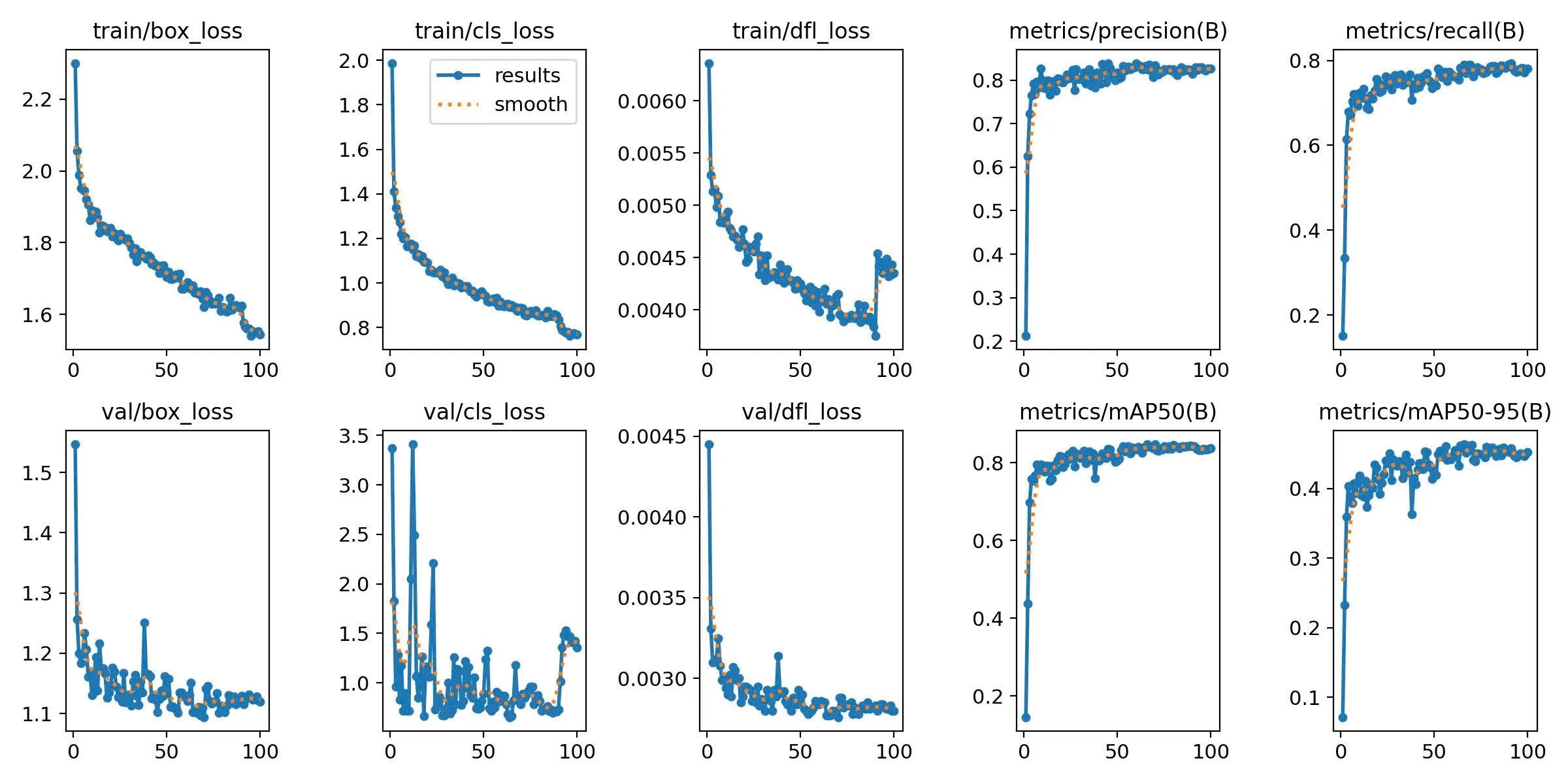

MODEL’S PERFORMANCE

The Koi fish tracking and counting project culminated in a final tracking accuracy of 87%. It was an invaluable learning experience that gave me a solid set of advanced technical skills.

The most formidable challenge was bridging the gap between the theoretical optimum and the practical reality. In theory, the simulations suggested several ideal camera installation locations that would provide both maximum coverage and the pixels-per-meter resolution needed for high-fidelity tracking. In the real pond, however, environmental factors, specifically the continuous stream of bubbles generated by the aerator (the "bump") and other dynamic elements, created interference that made these theoretically ideal setups unattainable in practice.

Navigating this discrepancy between theory and application forced innovative problem-solving and significantly deepened my expertise. I am proud to have advanced my capabilities in image processing and neural network model training, culminating in the successful development and implementation of an LPDSORT object tracking model customized for tracking and counting Koi fish in dynamic aquatic environments.
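To make the counting step concrete, here is a minimal sketch of how a fish count can be derived from tracker output. It assumes the tracker emits a list of integer track IDs per frame, and it ignores tracks that live for only a few frames, which is one common way to suppress spurious short-lived detections such as bubbles. The `min_frames` threshold and the data layout are hypothetical; this is not necessarily how the project's tracker handled interference.

```python
from collections import Counter


def count_fish(frames, min_frames=5):
    """Count distinct track IDs that appear in at least `min_frames` frames.

    `frames` is an iterable where each item holds the track IDs seen in one frame.
    """
    frames_seen = Counter()
    for track_ids in frames:
        # A track counts at most once per frame, even if reported twice.
        for track_id in set(track_ids):
            frames_seen[track_id] += 1
    return sum(1 for n in frames_seen.values() if n >= min_frames)


# Hypothetical tracker output: IDs 1 and 2 persist, ID 3 flickers for one frame.
tracker_output = [[1, 2], [1, 2, 3], [1, 2], [1]]
print(count_fish(tracker_output, min_frames=2))
```

A higher `min_frames` rejects more noise but can also drop fish that are only briefly visible, so the threshold would need tuning against labeled footage.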